Abstract

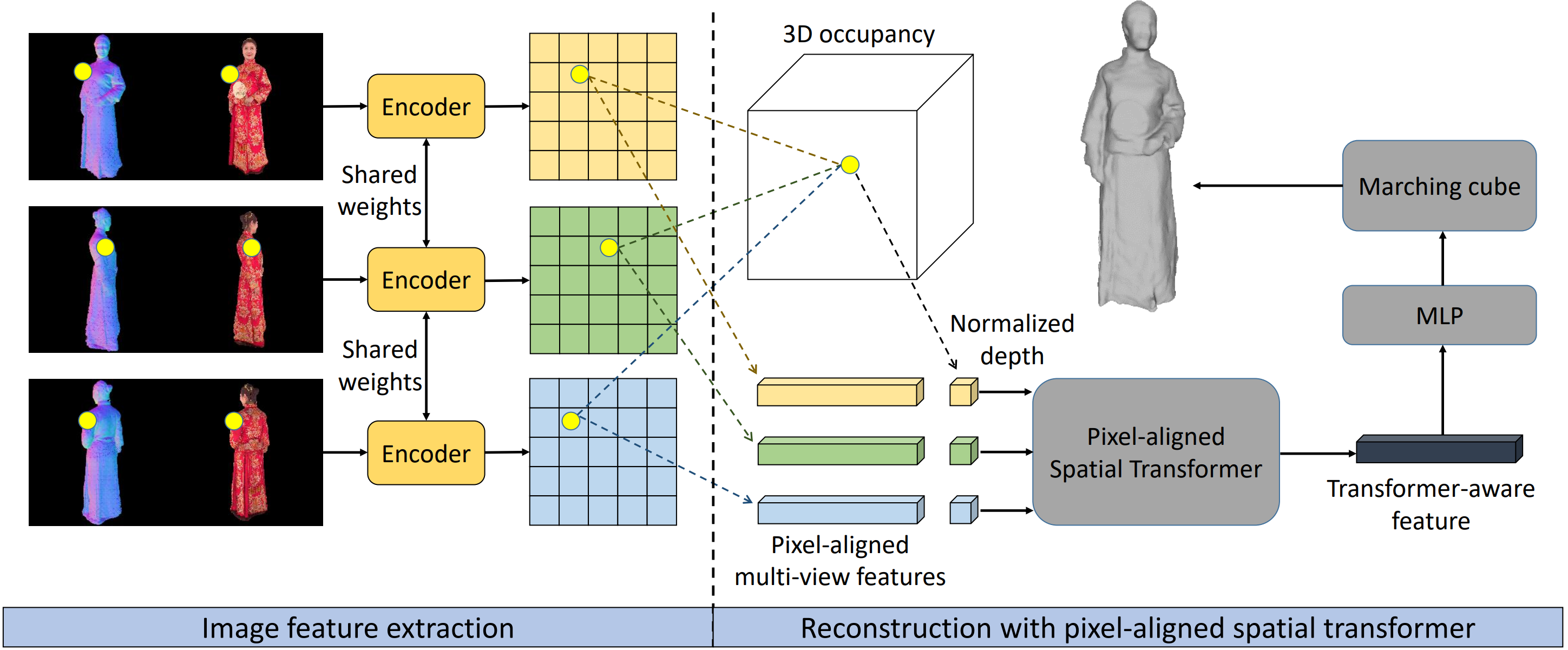

In this paper, we address the challenge of novel view rendering of human performers wearing clothes with complex texture patterns, using only a sparse set of camera views. Although some recent works have achieved remarkable rendering quality on humans with relatively uniform textures using sparse views, their rendering quality remains limited on complex texture patterns because they are unable to recover the high-frequency geometry details observed in the input views. To this end, we propose HDhuman, which combines a human reconstruction network with a pixel-aligned spatial transformer and a rendering network with geometry-guided pixel-wise feature integration to achieve high-quality human reconstruction and rendering. The pixel-aligned spatial transformer computes the correlations between the input views and generates human reconstruction results with high-frequency details. Based on the surface reconstruction results, geometry-guided pixel-wise visibility reasoning provides guidance for multi-view feature integration, enabling the rendering network to render high-quality images at 2K resolution from novel views. Unlike previous neural rendering works, which need to train or fine-tune a separate network for each scene, our method is a general framework that generalizes to novel subjects. Experiments show that our approach outperforms all prior generic and scene-specific methods on both synthetic and real-world data. Source code and test data will be made publicly available for research purposes.

Method

Reconstruction stage.
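
As a rough illustration of what "pixel-aligned" correlation across views can look like, the sketch below projects 3D query points into each input view, looks up the image feature at the projected pixel, and applies plain scaled dot-product attention across the views. It is a minimal sketch under assumptions, not the HDhuman network: the function names (`project`, `sample_nearest`, `cross_view_attention`), the pinhole camera model, the nearest-neighbour lookup, and the absence of learned projection weights are all choices made here for illustration.

```python
import numpy as np

def project(points, K, R, t):
    """Project (N, 3) world points with an assumed pinhole camera.

    Returns pixel coordinates (N, 2) and camera-space depth (N,).
    """
    cam = points @ R.T + t                # world -> camera coordinates
    z = cam[:, 2]
    uv = (cam @ K.T)[:, :2] / z[:, None]  # perspective divide
    return uv, z

def sample_nearest(feat, uv):
    """Nearest-neighbour lookup in an (H, W, C) feature map at (N, 2) pixels.

    Coordinates are clamped to the image bounds.
    """
    h, w, _ = feat.shape
    u = np.clip(np.rint(uv[:, 0]).astype(int), 0, w - 1)
    v = np.clip(np.rint(uv[:, 1]).astype(int), 0, h - 1)
    return feat[v, u]

def cross_view_attention(x):
    """Self-attention across views for each query point.

    x: (N, V, C) pixel-aligned features of N points in V views.
    Returns the attended features (N, V, C) and the weights (N, V, V).
    No learned query/key/value projections are used in this sketch.
    """
    c = x.shape[-1]
    scores = x @ x.transpose(0, 2, 1) / np.sqrt(c)
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ x, weights
```

In a learned model the attention would use trained projections and the lookup would typically be bilinear; the sketch only shows the data flow from 3D points to per-view features to cross-view correlation weights.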

Rendering stage.
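
One common way to realize geometry-guided visibility reasoning is a depth test: a surface point is treated as visible in an input view when its depth in that view matches the depth of the reconstructed surface at the projected pixel. The sketch below turns that test into soft per-view weights and uses them to blend multi-view features. It is an illustrative sketch under assumptions, not the paper's rendering network: `visibility_weights`, `fuse_features`, the exponential falloff, and the tolerance `tau` are hypothetical, and the per-view surface depths are assumed to have been rendered from the reconstructed geometry beforehand.

```python
import numpy as np

def visibility_weights(point_depth, surface_depth, tau=0.01):
    """Soft visibility of N points in V views from a depth test.

    point_depth:   (V, N) depth of each point in each view.
    surface_depth: (V, N) depth of the reconstructed surface at the
                   pixel each point projects to (assumed precomputed).
    tau:           assumed tolerance, in the same units as depth.
    Returns weights in (0, 1]; 1 means the point lies on the visible surface.
    """
    gap = np.maximum(point_depth - surface_depth, 0.0)  # how far behind the surface
    return np.exp(-gap / tau)

def fuse_features(features, vis, eps=1e-8):
    """Visibility-weighted average of per-view features.

    features: (V, N, C), vis: (V, N). Returns (N, C).
    """
    w = vis / (vis.sum(axis=0, keepdims=True) + eps)
    return (w[..., None] * features).sum(axis=0)

# Usage: one point seen by view 0 and occluded in view 1.
point_depth = np.array([[1.0], [2.0]])
surface_depth = np.array([[1.0], [1.0]])
feats = np.array([[[1.0, 0.0]], [[0.0, 1.0]]])  # (V=2, N=1, C=2)
vis = visibility_weights(point_depth, surface_depth)
fused = fuse_features(feats, vis)  # dominated by view 0's feature
```

A hard threshold would also work; the soft weight is used here only so that small errors in the reconstructed depth degrade the blend gradually rather than flipping a view on or off.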

Rendering Results on the Virtual Dataset Twindom (6 Views as Input).