Human Pose Transfer by Adaptive Hierarchical Deformation

Jinsong Zhang, Xingzi Liu, Kun Li*

Tianjin University

* Corresponding Author

Abstract

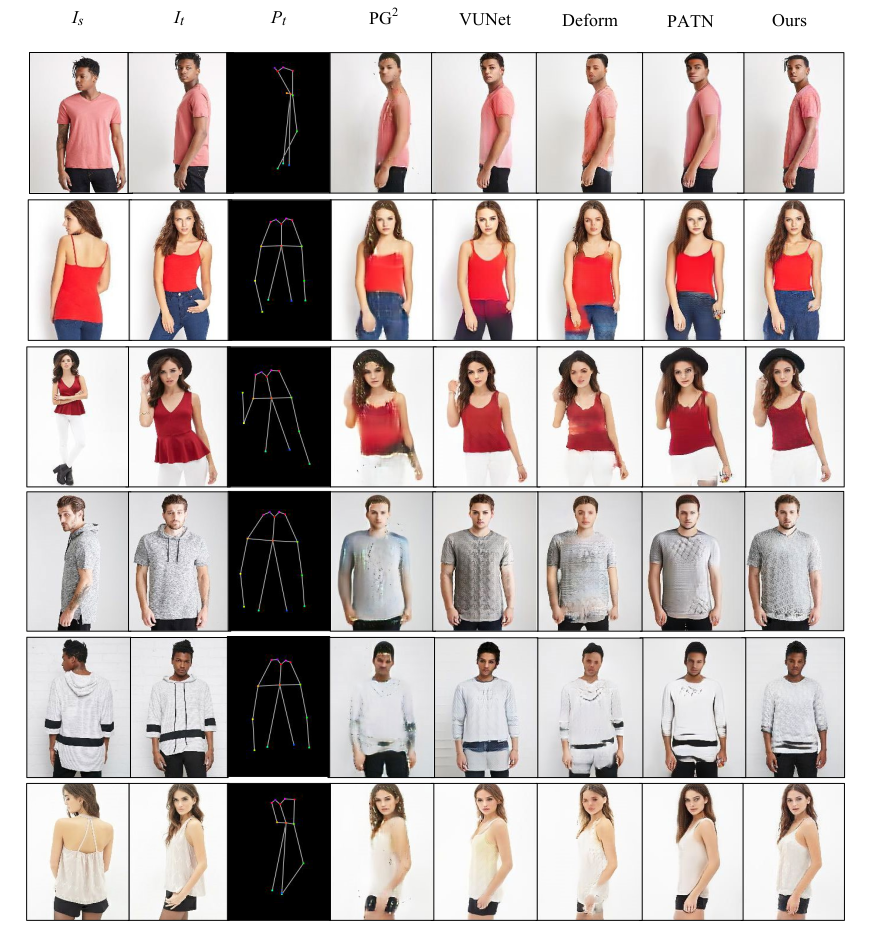

Human pose transfer, as a misaligned image generation task, is very challenging. Existing methods cannot make effective use of the input information and often fail to preserve the style and shape of hair and clothes. In this paper, we propose an adaptive human pose transfer network with two hierarchical deformation levels. The first level generates a human semantic parsing map aligned with the target pose, and the second level generates the final textured person image in the target pose under the guidance of this parsing. Vanilla convolution treats all pixels as valid information. To avoid this drawback, we use gated convolution in both levels to dynamically select the important features and adaptively deform the image layer by layer. Our model has very few parameters and converges quickly. Experimental results demonstrate that our model outperforms state-of-the-art methods, producing more consistent hair, faces, and clothes while using fewer parameters. Furthermore, our method can be applied to clothing texture transfer.
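To show what "dynamically select the important features" means, here is a minimal single-channel sketch of a gated convolution in plain Python. It assumes the common formulation output = φ(W_f * x) ⊙ σ(W_g * x), where a feature branch and a gating branch convolve the same input and the sigmoid gate acts as a learned per-pixel soft mask. The choice of φ = ELU, the 3×3 "same" zero padding, and the function names `conv2d_same` and `gated_conv2d` are illustrative assumptions. They are not taken from the released PINet code, which uses learned multi-channel layers.

```python
import math
from typing import List

Grid = List[List[float]]


def conv2d_same(image: Grid, kernel: Grid, bias: float = 0.0) -> Grid:
    """Single-channel 2D cross-correlation with zero padding, so the output has the input's size."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    ph, pw = kh // 2, kw // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = bias
            for u in range(kh):
                for v in range(kw):
                    y, x = i + u - ph, j + v - pw
                    if 0 <= y < h and 0 <= x < w:  # pixels outside the image count as zero
                        acc += kernel[u][v] * image[y][x]
            out[i][j] = acc
    return out


def elu(x: float) -> float:
    """ELU activation (alpha = 1), assumed here for the feature branch."""
    return x if x > 0 else math.expm1(x)


def sigmoid(x: float) -> float:
    """Numerically stable logistic sigmoid, producing a gate value in (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def gated_conv2d(image: Grid, feature_kernel: Grid, gate_kernel: Grid,
                 feature_bias: float = 0.0, gate_bias: float = 0.0) -> Grid:
    """Gated convolution: ELU(feature branch) multiplied element-wise by sigmoid(gating branch)."""
    feature = conv2d_same(image, feature_kernel, feature_bias)
    gate = conv2d_same(image, gate_kernel, gate_bias)
    return [[elu(f) * sigmoid(g) for f, g in zip(f_row, g_row)]
            for f_row, g_row in zip(feature, gate)]


if __name__ == "__main__":
    img = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
    identity = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
    zeros = [[0.0] * 3 for _ in range(3)]
    # A strongly positive gate bias passes features through; a strongly negative one suppresses them.
    print(gated_conv2d(img, identity, zeros, gate_bias=50.0))
    print(gated_conv2d(img, identity, zeros, gate_bias=-50.0))
```

In a vanilla convolution, the layer applies the same weights to every pixel regardless of whether that pixel carries useful information. In the gated version, the gating branch is also learned, so each spatial location receives its own value in (0, 1) that scales how much of the feature response survives. The fixed biases in the usage example only demonstrate the two extremes of this mask.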


Results

Fig. 1. Pose transfer results compared with state-of-the-art methods.

Fig. 2. Results of texture transfer using our method.

Citation

Jinsong Zhang, Xingzi Liu, Kun Li. "Human Pose Transfer by Adaptive Hierarchical Deformation". Computer Graphics Forum, 39(7): 325–337, 2020.

@article{PINet,
  author  = {Zhang, Jinsong and Liu, Xingzi and Li, Kun},
  title   = {Human Pose Transfer by Adaptive Hierarchical Deformation},
  journal = {Computer Graphics Forum},
  volume  = {39},
  number  = {7},
  pages   = {325--337},
  year    = {2020}
}